ChatGPT Trusted Contact is OpenAI’s latest safety feature. Recognizing that users increasingly turn to AI for emotional support, OpenAI built this optional tool to let adult users nominate a friend, family member, or caregiver who can be alerted during a mental health crisis. If ChatGPT’s automated systems detect conversations indicating a serious risk of self-harm or suicide, trained human reviewers evaluate the flagged messages. If the reviewers verify the risk, the system notifies the trusted contact, bridging the gap between artificial intelligence and real-world human care.

How the ChatGPT Trusted Contact Feature Works

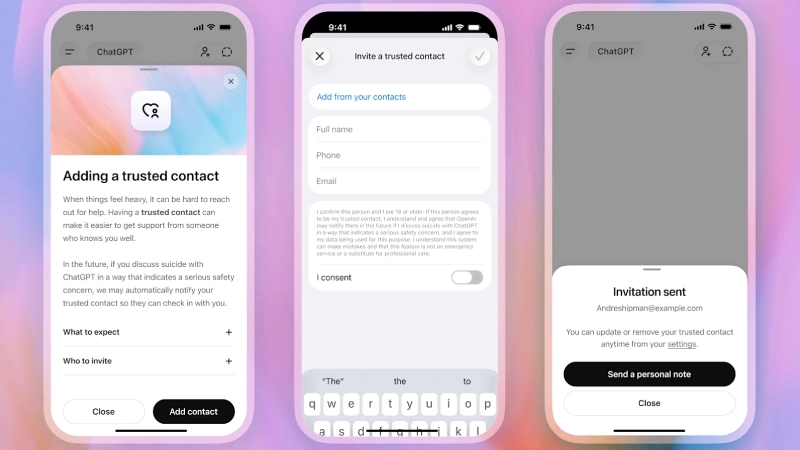

According to OpenAI, Trusted Contact is an optional setting available inside ChatGPT. Adult users can select one trusted individual who may be contacted in an emergency.

The process works in several stages:

- The user selects a trusted contact

- The contact receives an invitation to participate

- The contact must accept within one week

Once a contact is in place, ChatGPT’s automated systems monitor conversations for signs of a critical safety threat. Alerts are sent only after human reviewers assess the situations that have been flagged for review. When reviewers judge a situation to be high risk, the trusted contact receives a notification by email, SMS, or through the ChatGPT app. The notification itself is deliberately limited: OpenAI does not share full chat transcripts or detailed conversation histories with the trusted contact. The aim is to prompt direct, human-to-human support during a crisis.

OpenAI developed this feature to encourage people to support others who are going through an emotional crisis. The company describes Trusted Contact as an additional layer of protection alongside the crisis resources and localized helplines already available in ChatGPT. The company also stated that the system was developed with input from mental health experts, including members of the American Psychological Association and OpenAI’s Global Physician Network. The broader idea behind the feature is that an AI system should not attempt to replace human care during mental health emergencies. Instead, the technology connects users with people who are already part of their personal lives.

The feature reflects a broader trend in the technology industry toward pairing AI assistance with human oversight and decision-making. Trusted Contact also responds to growing concern about the emotional attachments users form with AI chatbots. Over the past two years, AI assistants have become more conversational, more responsive to human emotion, and more integrated into users’ daily lives. Some users now depend on chatbots for emotional support, personal guidance, and even social interaction. With Trusted Contact, OpenAI is trying to build stronger safeguards for users who engage in emotionally charged conversations.

Privacy and Safety Concerns

Although experts see the feature as a potentially effective safety measure, it raises two main concerns, both related to privacy and AI surveillance. First, some critics argue that users may feel uncomfortable knowing that their conversations could trigger human review and emergency alerts. Second, people in emotional distress might hide their feelings out of worry that someone close to them will be notified.

OpenAI has emphasized that:

- The feature is entirely optional

- The trusted contact must agree to take on the role

- Trained human reviewers evaluate alerts before any notification is sent

- Notifications do not include chat transcripts or conversation details

The launch shows how deeply artificial intelligence systems now reach into the most personal parts of users’ lives.

Mental Health Support and the Role of AI

Industry experts and researchers are closely examining how AI chatbots interact with users who need mental health support. The Trusted Contact feature reflects a larger shift in how AI systems are being used. People now use chatbots for more than productivity tasks and information searches. Many users treat AI systems as conversational partners with whom they share personal emotional experiences.

This feature acknowledges ChatGPT’s growing emotional importance to its users and aims to build stronger protections around it.

Conclusion

With its Trusted Contact feature, OpenAI extends the mental health safety functions in its ChatGPT application. The company has developed a system that lets users select a contact who will receive alerts during critical emotional situations. The feature shows how modern users now rely on artificial intelligence systems to help manage their personal emotions. As artificial intelligence becomes more embedded in daily life, OpenAI and other companies will face new challenges around privacy, safety, emotional dependence, and mental health support in their products.